Your innodb_buffer_pool_size is enormous. If you only have 5GB of InnoDB data and indexes, then you should only have about 8GB.

First run this query:

`SELECT CEILING(Total_InnoDB_Bytes*1.6/POWER(1024,3)) RIBPS FROM (SELECT SUM(data_length+index_length) Total_InnoDB_Bytes FROM information_schema.tables WHERE engine='InnoDB') A;`

This will give you the RIBPS, Recommended InnoDB Buffer Pool Size, based on all InnoDB data and indexes, with an additional 60%.
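As a rough illustration of the RIBPS arithmetic (total InnoDB bytes times 1.6, divided by 1024³, rounded up), here is a minimal Python sketch. The function name `recommended_buffer_pool_gib` is hypothetical, and the calculation uses integer math (16/10 instead of 1.6) to avoid floating-point rounding; it is meant as a sanity check, not a replacement for running the query against `information_schema.tables`.

```python
GIB = 1024 ** 3  # bytes per gibibyte, the POWER(1024,3) term in the query


def recommended_buffer_pool_gib(total_innodb_bytes: int) -> int:
    """Return CEILING(total_innodb_bytes * 1.6 / 1024^3) using integer math.

    Hypothetical helper: mirrors the RIBPS formula (data + indexes + 60%).
    """
    if total_innodb_bytes < 0:
        raise ValueError("byte count must be non-negative")
    # Multiply by 16 and divide by 10 to express the factor 1.6 exactly;
    # the -(-a // b) idiom is ceiling division for non-negative integers.
    return -(-(total_innodb_bytes * 16) // (10 * GIB))


if __name__ == "__main__":
    # 5 GiB of InnoDB data and indexes, as in the question
    print(recommended_buffer_pool_gib(5 * GIB))
```

For 5GB of data and indexes this gives 8, which matches the "about 8GB" figure in the answer.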

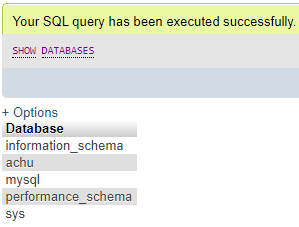

I have a busy database with solely InnoDB tables which is about 5GB in size. The database runs on a Debian server using SSD disks, and I've set max connections = 800, which sometimes saturates and grinds the server to a halt. The average is about 2.5K queries per second, so I need to optimize memory usage to make room for the maximum possible number of connections.

I've seen suggestions that innodb_buffer_pool_size should be up to 80% of the total memory. On the other hand, I get this warning from the tuning-primer script:

Max Memory Ever Allocated : 91.97 G
Configured Max Per-thread Buffers : 72.02 G

Here are my current innodb variables:

| innodb_adaptive_flushing | ON |
| innodb_data_file_path | ibdata1:10M:autoextend |

A side note that might be relevant: when I try to insert a large post (say, over 10KB) from Drupal (which sits on a separate web server) into the database, it takes forever and the page does not return correctly.

Given all this, I'm wondering what my innodb_buffer_pool_size should be for optimal performance. I would appreciate your suggestions for setting this and other parameters optimally for this scenario.
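To put the tuning-primer figure in perspective, the sketch below divides the reported "Configured Max Per-thread Buffers" total by the connection limit to estimate how much memory each connection could claim in the worst case. It assumes the 72.02 G figure is the per-thread buffer total for all 800 connections and that "G" means GiB; the function name `per_connection_mib` is hypothetical.

```python
def per_connection_mib(per_thread_total_gib: float, max_connections: int) -> float:
    """Estimate worst-case per-connection buffer memory in MiB.

    Assumption: per_thread_total_gib is the sum of per-thread buffers
    across all max_connections connections, as a tuning script would report.
    """
    if max_connections <= 0:
        raise ValueError("max_connections must be positive")
    return per_thread_total_gib * 1024 / max_connections


if __name__ == "__main__":
    # Figures from the question: 72.02 G across max connections = 800
    print(f"{per_connection_mib(72.02, 800):.1f} MiB per connection")
```

Under these assumptions, lowering either the per-thread buffer sizes or the connection limit reduces the configured worst case proportionally.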
